CUBIC's Idle Optimization Causes QUIC Death Spiral at Cloudflare

Cloudflare engineers traced a 61% test failure rate to a subtle bug in quiche's CUBIC congestion controller. The bug, introduced when a 2017 Linux kernel fix was ported to quiche, locks the congestion window (cwnd) at its 2700-byte minimum once the connection falls into a specific recovery oscillation.

The Test That Failed 61% of the Time

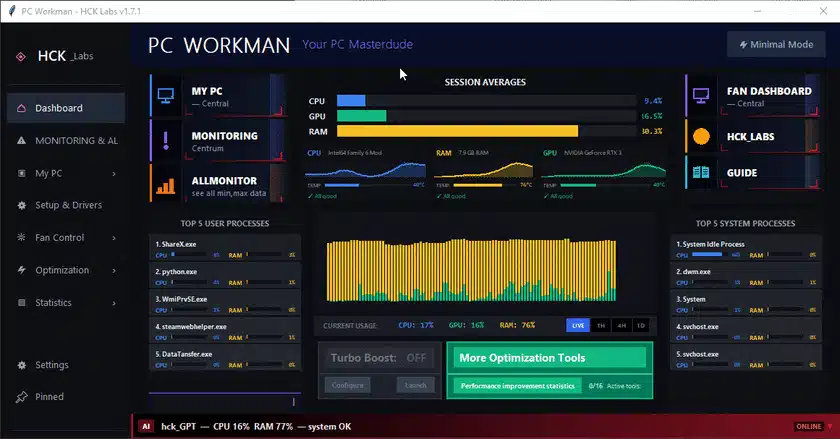

The integration test simulated a 10MB HTTP/3 download over localhost with 10ms RTT. For the first two seconds, 30% random packet loss was injected. After two seconds, loss stopped entirely. With Reno, the test passed 100% of the time. With CUBIC, 61 out of 100 runs failed to complete within the 10-second timeout—even though the download should finish in 4-5 seconds.
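The loss schedule used by this test is simple to reproduce in a harness. The sketch below assumes a hypothetical `LossInjector` that the test consults once per packet: 30% random loss during the first two seconds, none afterwards. The xorshift64 generator only keeps the example deterministic and dependency-free; none of these names come from Cloudflare's test suite.

```rust
use std::time::Duration;

/// Hypothetical per-packet loss injector matching the test description:
/// drop with probability `loss_rate` during the first `loss_window`, then never.
struct LossInjector {
    state: u64,            // xorshift64 state: deterministic, no external crates
    loss_rate: f64,        // 0.30 for 30% loss
    loss_window: Duration, // 2 seconds in the test described above
}

impl LossInjector {
    fn new(seed: u64, loss_rate: f64, loss_window: Duration) -> Self {
        // xorshift64 gets stuck at zero, so never seed it with 0.
        LossInjector { state: seed.max(1), loss_rate, loss_window }
    }

    /// One xorshift64 step, mapped to a uniform f64 in [0, 1).
    fn next_f64(&mut self) -> f64 {
        let mut x = self.state;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.state = x;
        (x >> 11) as f64 / (1u64 << 53) as f64
    }

    /// Should a packet sent `elapsed` after the start of the test be dropped?
    fn should_drop(&mut self, elapsed: Duration) -> bool {
        if elapsed >= self.loss_window {
            return false; // the loss phase is over
        }
        self.next_f64() < self.loss_rate
    }
}

fn main() {
    let mut injector = LossInjector::new(42, 0.30, Duration::from_secs(2));
    // 10,000 packets spread evenly over the first 2 seconds (one every 200 µs).
    let dropped = (0..10_000u64)
        .filter(|i| injector.should_drop(Duration::from_micros(*i * 200)))
        .count();
    println!("dropped {} of 10000 packets during the loss phase", dropped);
    // Once two seconds have passed, nothing is dropped.
    assert!(!injector.should_drop(Duration::from_secs(2)));
}
```

Roughly 3,000 of the 10,000 packets are dropped in the first phase; after the two-second mark every packet gets through, which is exactly the condition under which a healthy controller should recover.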

The Oscillation: 999 State Transitions in 6.7 Seconds

Instrumentation revealed that after the loss phase ended, CUBIC's cwnd stayed pinned at the minimum floor of 2700 bytes (two full-sized packets of 1350 bytes each). The congestion state machine switched between recovery and congestion avoidance 999 times over 6.7 seconds: about one transition every 6.7 ms, or one full recovery-to-avoidance-and-back cycle every ~13.4 ms, on the order of one round trip.
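The interval arithmetic behind the 999-transitions-in-6.7-seconds figure is easy to check. A full cycle (recovery to congestion avoidance and back) takes two transitions, so the per-cycle period is twice the per-transition spacing. The helper name `ms_per_transition` is purely illustrative.

```rust
/// Average spacing between state transitions, in milliseconds.
fn ms_per_transition(transitions: u32, total_secs: f64) -> f64 {
    total_secs * 1000.0 / transitions as f64
}

fn main() {
    let per_transition = ms_per_transition(999, 6.7);
    // recovery -> congestion avoidance -> recovery takes two transitions
    let per_cycle = 2.0 * per_transition;
    // the 2700-byte floor is two full-sized packets
    let packet_bytes = 2700 / 2;
    println!(
        "{:.1} ms per transition, {:.1} ms per cycle, {} bytes per packet",
        per_transition, per_cycle, packet_bytes
    );
}
```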

Root Cause: Porting a Kernel Fix to User-Space QUIC

The bug originates from a 2017 Linux kernel change (commit by Eric Dumazet, Yuchung Cheng, Neal Cardwell) that adjusted CUBIC's epoch_start after idle periods. The kernel fix shifted the epoch forward by the idle duration to prevent cwnd inflation. When Cloudflare ported this to quiche in 2020, they used the on_packet_sent() callback to detect idle:

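```rust
// cubic.rs: on_packet_sent() (simplified)
fn on_packet_sent(&mut self, bytes_in_flight: usize, now: Instant, /* ... */) {
    // Nothing in flight: treat the gap since the last send as idle time.
    if bytes_in_flight == 0 {
        let delta = now - self.last_sent_time;
        // Shift the recovery start forward by that "idle" gap
        // (the idea carried over from the kernel's epoch_start adjustment).
        self.congestion_recovery_start_time += delta;
    }
    self.last_sent_time = now;
}
```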

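This logic has a flaw. congestion_recovery_start_time is normally set during ACK processing, when a congestion event is detected, not at send time. Adding the idle delta at send time can push the recovery start time past the current time, into the future. QUIC loss recovery as specified in RFC 9002 treats any packet sent at or before congestion_recovery_start_time as part of the recovery period, and ACKs for such packets do not grow cwnd. Packets sent right after the shift are therefore misclassified as belonging to a recovery episode that has already ended.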
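A small model makes the misclassification concrete. This is a minimal sketch, not quiche's code: the `CubicState` struct is hypothetical, the field names follow the simplified excerpt, and `in_congestion_recovery` mirrors the RFC 9002 rule that a packet sent at or before the recovery start belongs to the recovery period. The scenario assumes a loss declared at t0 + 10 ms and the next send 1 ms later.

```rust
use std::time::{Duration, Instant};

/// Hypothetical model of the two fields involved; names follow the excerpt.
struct CubicState {
    congestion_recovery_start_time: Option<Instant>,
    last_sent_time: Option<Instant>,
}

impl CubicState {
    /// RFC 9002-style test: a packet sent at or before the recovery start belongs
    /// to the recovery period, so acknowledging it must not grow cwnd.
    fn in_congestion_recovery(&self, sent_time: Instant) -> bool {
        matches!(self.congestion_recovery_start_time, Some(start) if sent_time <= start)
    }

    /// The send-time idle adjustment from the simplified excerpt.
    fn on_packet_sent(&mut self, bytes_in_flight: usize, now: Instant) {
        if bytes_in_flight == 0 {
            if let (Some(start), Some(last)) =
                (self.congestion_recovery_start_time, self.last_sent_time)
            {
                // Shift the recovery start forward by the "idle" gap.
                self.congestion_recovery_start_time = Some(start + (now - last));
            }
        }
        self.last_sent_time = Some(now);
    }
}

fn main() {
    let ms = Duration::from_millis;
    let t0 = Instant::now();
    let mut cubic = CubicState { congestion_recovery_start_time: None, last_sent_time: None };

    cubic.on_packet_sent(0, t0);                              // last flight sent at t0
    cubic.congestion_recovery_start_time = Some(t0 + ms(10)); // loss declared at t0 + 10 ms

    // The ACKs empty the pipe; the next send at t0 + 11 ms sees bytes_in_flight == 0.
    let next_send = t0 + ms(11);
    cubic.on_packet_sent(0, next_send);

    // Recovery start is now t0 + 21 ms, i.e. 10 ms in the future.
    assert_eq!(cubic.congestion_recovery_start_time, Some(t0 + ms(21)));
    // So the packet just sent is classified as part of the old recovery period.
    assert!(cubic.in_congestion_recovery(next_send));
}
```

Without the shift, the recovery start would have stayed at t0 + 10 ms and the packet sent at t0 + 11 ms would have counted as a normal, window-growing send.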

The Self-Perpetuating Trap

The bug triggers only when three conditions align:

- A real loss event sets the recovery boundary

- The connection is in congestion avoidance

- cwnd has collapsed to the two-packet minimum

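At minimum cwnd the sender has only two packets in flight, and once both are acknowledged bytes_in_flight drops to zero. The next on_packet_sent() call therefore sees an "idle" connection and advances congestion_recovery_start_time by the time since the previous send, roughly a full RTT. The packets it just sent now fall before the new recovery start, so their ACKs do not grow cwnd, and the same thing happens on the following round trip. This creates a feedback loop: the recovery period keeps being extended, so cwnd never leaves its floor.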
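The feedback loop can be reproduced with a round-trip-granularity model. This is a sketch under stated assumptions, not CUBIC: times are integer milliseconds, the RTT is fixed at 10 ms, `GAP` is an assumed 1 ms between ACK arrival and the next send, and window growth is a plain one-MSS-per-RTT increase standing in for CUBIC's curve. `IdlePolicy` and `simulate` are hypothetical names.

```rust
/// Whether the send path shifts the recovery start when bytes_in_flight == 0.
#[derive(Clone, Copy, PartialEq, Debug)]
enum IdlePolicy {
    ShiftOnEmpty, // the ported behaviour described above
    NoShift,      // never shift, for comparison
}

/// Returns cwnd in bytes after `rounds` loss-free round trips.
fn simulate(policy: IdlePolicy, rounds: u32) -> usize {
    const MSS: usize = 1350;
    const MIN_CWND: usize = 2 * MSS; // the 2700-byte floor
    const RTT: u64 = 10;
    const GAP: u64 = 1; // assumed delay between ACK arrival and the next send

    let mut cwnd = MIN_CWND;
    let mut last_sent: u64 = 0;        // last flight of the loss phase leaves at t = 0
    let mut recovery_start: u64 = RTT; // its loss is declared one RTT later
    let mut now = RTT + GAP;           // first send once loss has stopped

    for _ in 0..rounds {
        // The previous flight is fully ACKed, so bytes_in_flight == 0 here.
        if policy == IdlePolicy::ShiftOnEmpty {
            recovery_start += now - last_sent; // about one RTT every round
        }
        last_sent = now;

        // One RTT later this flight is ACKed; RFC 9002-style recovery check.
        let in_recovery = last_sent <= recovery_start;
        if !in_recovery {
            cwnd += MSS; // simplified additive increase, NOT CUBIC's window curve
        }
        now += RTT + GAP;
    }
    cwnd
}

fn main() {
    for policy in [IdlePolicy::ShiftOnEmpty, IdlePolicy::NoShift] {
        println!("{:?}: cwnd after 20 RTTs = {} bytes", policy, simulate(policy, 20));
    }
}
```

Under `ShiftOnEmpty` the recovery start always lands one RTT after the latest send, so cwnd stays at 2700 bytes no matter how many round trips pass; under `NoShift` it grows by 1350 bytes per round trip.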

The Fix: A One-Line Change

The Cloudflare team fixed the bug by shifting congestion_recovery_start_time only when the connection has genuinely been idle long enough to warrant it, rather than on every send that happens to find bytes_in_flight == 0. Cloudflare describes the fix as a near one-line change that breaks the oscillation cycle.
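One way to express "idle long enough" is to compare the gap since the last send against a threshold. The sketch below is hypothetical and is not quiche's actual patch: `adjusted_recovery_start` and `idle_threshold` are illustrative names, and the threshold of twice a 10 ms smoothed RTT is an assumed value.

```rust
use std::time::{Duration, Instant};

/// Hypothetical guarded version of the send-time idle adjustment. The recovery
/// start is shifted only when the gap since the last send exceeds
/// `idle_threshold`, an assumed tuning knob (e.g. a small multiple of the
/// smoothed RTT). This is an illustration, not quiche's actual patch.
fn adjusted_recovery_start(
    recovery_start: Option<Instant>,
    last_sent: Option<Instant>,
    now: Instant,
    bytes_in_flight: usize,
    idle_threshold: Duration,
) -> Option<Instant> {
    match (recovery_start, last_sent) {
        (Some(start), Some(last)) if bytes_in_flight == 0 => {
            let gap = now.saturating_duration_since(last);
            if gap > idle_threshold {
                Some(start + gap) // genuinely idle: keep the shifting behaviour
            } else {
                Some(start) // an empty pipe after a normal ACK round trip is not idleness
            }
        }
        _ => recovery_start, // data in flight, or nothing to adjust yet
    }
}

fn main() {
    let ms = Duration::from_millis;
    let t0 = Instant::now();
    let start = Some(t0 + ms(10)); // loss declared at t0 + 10 ms
    let threshold = ms(10) * 2;    // assumed: twice a 10 ms smoothed RTT

    // Min-cwnd round trip: the pipe is empty after 11 ms, but that is not idleness.
    assert_eq!(adjusted_recovery_start(start, Some(t0), t0 + ms(11), 0, threshold), start);

    // Application genuinely idle for 500 ms: the shift still happens.
    assert_eq!(
        adjusted_recovery_start(start, Some(t0), t0 + ms(500), 0, threshold),
        Some(t0 + ms(510))
    );
}
```

The guard only removes the false positive at minimum cwnd, where the pipe empties once per round trip; it leaves the handling of a real idle period unchanged.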

Why This Matters for QUIC Implementations

This bug highlights the dangers of porting kernel-level TCP optimizations to user-space QUIC stacks. The Linux kernel delivers a CA_EVENT_TX_START event to its congestion controllers, a hook that user-space QUIC stacks lack, so implementers approximate idle detection with bytes_in_flight == 0 checks. As Cloudflare notes, the bug is invisible outside the minimum-cwnd regime: it surfaces only under heavy loss that drives cwnd to its floor.

Lessons for Developers

- Test congestion controllers at minimum cwnd, not just steady state

- Be wary of porting kernel TCP optimizations to user-space QUIC without adapting the idle detection mechanism

- Instrument state transitions: the 999 recovery/avoidance transitions were invisible without qlog visualization

Cloudflare has merged the fix into quiche. The full analysis is available on their blog.