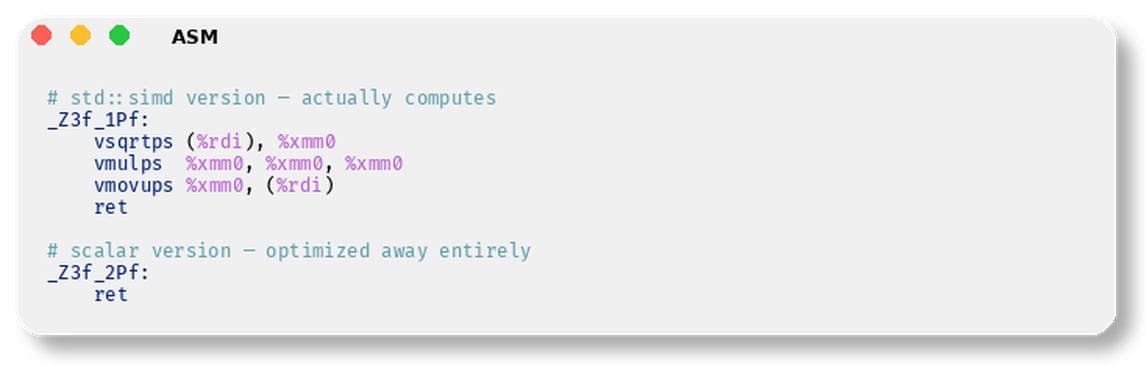

C++26 ships with std::simd (P1928), a library-based portable SIMD abstraction. The pitch: write SIMD code once, compile for AVX2, AVX-512, NEON, SVE. No more #ifdef __AVX512F__ spaghetti. Just std::simd and let the compiler figure it out. But benchmarks and a satirical repository by NoNaeAbC show real deficiencies: 10x slower compilation, worse performance than scalar loops, wrong default vector width, and inability to express essential SIMD operations. The compiler's auto-vectorizer beats it on every metric.
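To make the "#ifdef spaghetti" concrete, here is a minimal sketch of the hand-written, per-ISA code that a portable SIMD layer is meant to replace. The kernel name `add_arrays` is hypothetical and not taken from any benchmark mentioned here. The intrinsics are the standard Intel and Arm ones.

```cpp
#include <cstddef>

#if defined(__AVX512F__) || defined(__AVX2__)
#include <immintrin.h>
#elif defined(__ARM_NEON)
#include <arm_neon.h>
#endif

// Hypothetical kernel: out[i] = a[i] + b[i].
// Each ISA gets its own hand-written loop; the scalar loop at the end
// handles the leftover elements (or everything, if no branch matched).
void add_arrays(const float* a, const float* b, float* out, std::size_t n) {
    std::size_t i = 0;
#if defined(__AVX512F__)
    for (; i + 16 <= n; i += 16) {            // 16 floats per 512-bit register
        __m512 va = _mm512_loadu_ps(a + i);
        __m512 vb = _mm512_loadu_ps(b + i);
        _mm512_storeu_ps(out + i, _mm512_add_ps(va, vb));
    }
#elif defined(__AVX2__)
    for (; i + 8 <= n; i += 8) {              // 8 floats per 256-bit register
        __m256 va = _mm256_loadu_ps(a + i);
        __m256 vb = _mm256_loadu_ps(b + i);
        _mm256_storeu_ps(out + i, _mm256_add_ps(va, vb));
    }
#elif defined(__ARM_NEON)
    for (; i + 4 <= n; i += 4) {              // 4 floats per 128-bit register
        float32x4_t va = vld1q_f32(a + i);
        float32x4_t vb = vld1q_f32(b + i);
        vst1q_f32(out + i, vaddq_f32(va, vb));
    }
#endif
    for (; i < n; ++i) {                      // scalar tail / fallback
        out[i] = a[i] + b[i];
    }
}
```

The final scalar loop on its own is also the form an auto-vectorizer typically handles well. Whether it matches the hand-written branches depends on the compiler and flags.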

A Decade in Committee

std::simd started with Matthias Kretz's Vc library (2009-2010) for high-energy physics simulations at CERN. The proposal P0214 appeared around 2016, went through nine revisions, and was published as part of Parallelism TS 2 (ISO/IEC TS 19570:2018). GCC 11 shipped an experimental `<experimental/simd>` in 2021. Then P1928 promoted it into C++26, after nearly a decade of committee discussion. During that decade, auto-vectorizers in GCC, Clang, and MSVC improved enormously. ISPC proved language-level SIMD generates better code. ARM SVE challenged fixed-width abstractions. -march=native matured so scalar loops auto-vectorize to the widest registers. std::simd is a 2012 solution arriving in 2026.

The Competition Didn't Wait

Google Highway is the most serious competitor: performance-portable, length-agnostic SIMD with runtime dispatch. It detects the CPU at runtime and dispatches to SSE4, AVX2, AVX-512, or NEON/SVE without recompilation; std::simd has no runtime dispatch. Being length-agnostic, it works naturally with ARM SVE's scalable vectors. Adoption: Chromium, Firefox, JPEG XL, libaom, Jpegli, libvips. When Google needed portable SIMD for production codecs, they built Highway, not std::simd. However, Highway's API is verbose (tag-dispatched hn::Mul(d, a, b) instead of operator overloads) and requires HWY_DYNAMIC_DISPATCH macros that fragment code.
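The idea behind runtime dispatch can be sketched without Highway. The following is not Highway's API. It is a minimal illustration that assumes GCC or Clang on x86 for the `target` attribute and `__builtin_cpu_supports`, and falls back to a single baseline function everywhere else. The names `scale_add`, `pick_scale_add`, and `HAVE_X86_DISPATCH` are hypothetical.

```cpp
#include <cstddef>

using ScaleAddFn = void (*)(float, const float*, const float*, float*, std::size_t);

// Baseline: compiled for whatever ISA the translation unit targets.
static void scale_add_baseline(float k, const float* x, const float* y,
                               float* out, std::size_t n) {
    for (std::size_t i = 0; i < n; ++i) out[i] = k * x[i] + y[i];
}

#if defined(__GNUC__) && (defined(__x86_64__) || defined(__i386__))
#define HAVE_X86_DISPATCH 1  // hypothetical macro, for this sketch only
// Same loop, but the compiler may use AVX2 instructions inside this function
// even when the rest of the file is built without -mavx2.
__attribute__((target("avx2")))
static void scale_add_avx2(float k, const float* x, const float* y,
                           float* out, std::size_t n) {
    for (std::size_t i = 0; i < n; ++i) out[i] = k * x[i] + y[i];
}
#endif

// Query the CPU and choose an implementation.
static ScaleAddFn pick_scale_add() {
#if defined(HAVE_X86_DISPATCH)
    __builtin_cpu_init();
    if (__builtin_cpu_supports("avx2")) return scale_add_avx2;
#endif
    return scale_add_baseline;
}

// Public entry point: the choice is made once, on the first call.
void scale_add(float k, const float* x, const float* y,
               float* out, std::size_t n) {
    static const ScaleAddFn fn = pick_scale_add();
    fn(k, x, y, out, n);
}
```

One binary built this way can run on CPUs with and without AVX2. A library like Highway automates this pattern across many targets, which is where its dispatch macros come from.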

SIMDe (SIMD Everywhere) takes a different approach: portable implementations of intrinsics. Write _mm256_shuffle_epi8() and it works on ARM via NEON/SVE. Existing intrinsics code gains portability without a rewrite. But it locks you into Intel's mental model, and some x86 operations decompose into multiple NEON instructions, which adds overhead.

xsimd covers SSE through AVX-512, NEON, SVE, WebAssembly SIMD, Power VSX, and RISC-V vectors. It's the SIMD backend for xtensor, but as a library-level abstraction it shares std::simd's opacity to the optimizer. Documentation is thin, community small.

EVE (Expressive Vector Engine) by committee participant Joel Falcou is a C++20 rewrite of Boost.SIMD. It covers SSE2 through AVX-512, NEON, ASIMD, and SVE with fixed sizes. But it's still a library-based approach with optimizer opacity, no runtime dispatch, limited SVE support, and no Visual Studio support. Its README calls it "a research project first", and the library hasn't reached 1.0. No major production users.

Agner Fog's Vector Class Library (VCL) is a set of thin wrappers around intrinsics: x86-only and a one-person project. Dead end for ARM.

ISPC solves the problem at the language level: a separate compiler with its own syntax, generating better code for control-flow-heavy SIMD. But it requires a separate build step, separate debugging, and interop through a C ABI.

Compile-Time and Runtime Benchmarks

Including `<experimental/simd>` pulls in deeply nested template machinery. A trivial sin on a SIMD vector takes ~2.2 seconds to compile. The equivalent scalar for-loop? 0.2 seconds, roughly a 10x penalty per translation unit. In a trading system with hundreds of translation units, that's minutes of wasted build time. And the code runs slower than scalar loops anyway.
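For orientation, the two sides of such a comparison might look roughly like the sketch below. This is a reconstruction, not the benchmark's actual source. The function names `sin_scalar` and `sin_simd` and the macro `HAVE_STDX_SIMD` are hypothetical. The SIMD half uses the Parallelism TS 2 spelling (`std::experimental`) as shipped by libstdc++ in GCC 11 and later, not the final C++26 names, and is compiled only when that header is available.

```cpp
#include <cmath>
#include <cstddef>

// Scalar version: a plain loop the auto-vectorizer is free to transform.
void sin_scalar(const float* in, float* out, std::size_t n) {
    for (std::size_t i = 0; i < n; ++i) out[i] = std::sin(in[i]);
}

// Assumption: only libstdc++ with GCC in C++17 mode or later is used here.
#if defined(__GLIBCXX__) && !defined(__clang__) && __cplusplus >= 201703L
#if __has_include(<experimental/simd>)
#include <experimental/simd>
#define HAVE_STDX_SIMD 1  // hypothetical macro, for this sketch only

namespace stdx = std::experimental;

// SIMD version: whole vectors of native width, then a scalar tail.
void sin_simd(const float* in, float* out, std::size_t n) {
    using V = stdx::native_simd<float>;
    std::size_t i = 0;
    for (; i + V::size() <= n; i += V::size()) {
        V v(in + i, stdx::element_aligned);                    // load V::size() floats
        stdx::sin(v).copy_to(out + i, stdx::element_aligned);  // vector sin, store
    }
    for (; i < n; ++i) out[i] = std::sin(in[i]);               // leftover elements
}
#endif
#endif
```

Timing each function in its own translation unit (for example with `time g++ -O2 -c`) is one way to check the compile-time gap on a given toolchain. The numbers will vary by compiler version and flags.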

The template-heavy implementation also produces atrocious error messages. Using std::simd with a where() expression yields 138 lines of template instantiation errors referencing internal types like _SimdWrapper<_Float16, 8, void>.

What Should You Do?

If you need portable SIMD today, use Highway for production codecs and image processing, ISPC for control-flow-heavy kernels, or SIMDe for migrating x86 intrinsics to ARM. Avoid std::simd until compiler implementations mature and the compile-time penalty is addressed. The committee spent a decade on a library abstraction that the ecosystem has already surpassed.